CERTAIN Project Showcased at Dagstuhl Seminar

Dagstuhl, Germany – July 2025 – The CERTAIN project recently presented at a seminar held at Schloss Dagstuhl, the Leibniz Center for Informatics. The seminar was an opportunity to connect with leading experts and contribute to discussions on AI governance and compliance.

Exploring Policy’s Role in Sociotechnical Systems

The Dagstuhl Seminar’s primary focus was to explore how policies can effectively govern complex sociotechnical systems, aiming to balance agent autonomy with system-wide control. Key challenges addressed included:

- Designing system architectures that clearly define agent assumptions and guarantees.

- Developing formal models and semantics for policy expression.

- Enabling robust reasoning and monitoring capabilities over policies.

- Designing adaptive methodologies for policy specification and evolution as AI advances.

The seminar brought together a diverse group of participants, blending perspectives from computing, law, public administration, and social sciences. It drew on established disciplines such as the Semantic Web, Knowledge Representation, Deontic Logic, and Multiagent Systems.

CERTAIN’s Contribution to Operationalizing AI Regulations

A key part of our participation involved a breakout group focused on the technical representation of policies and standards. Here, the CERTAIN project served as a relevant example of how major regulatory requirements – like those from the EU AI Act – can be translated into practical, operational steps. Discussions highlighted how policy-relevant metadata and ontologies are fundamental for building effective automated compliance verification.

Unpacking CERTAIN: Our Approach to Traceable and Compliant AI

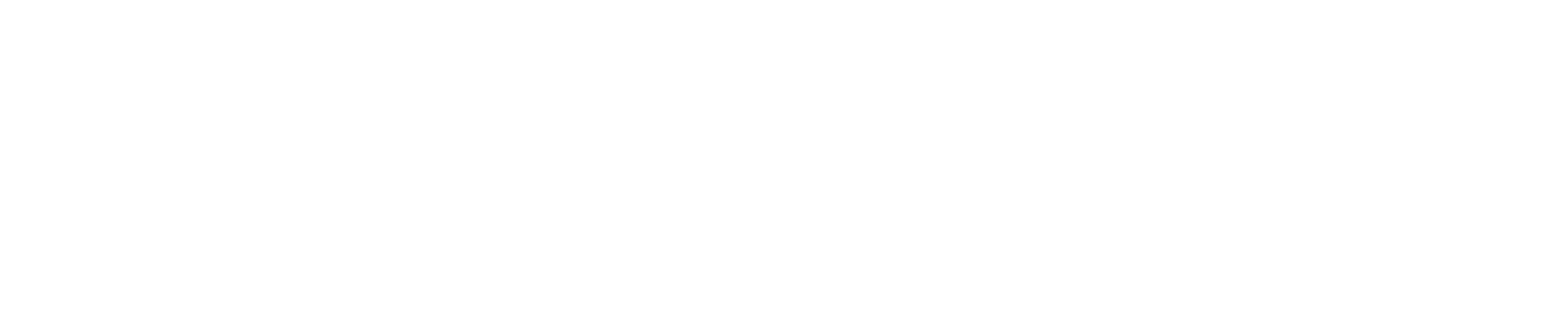

A notable segment of the seminar was the presentation by Mr. Sebastian Neumaier from St. Pölten University of Applied Sciences, Austria. His talk, titled “CERTAIN: Towards Traceability and Regulatory Compliance in AI,” was well received.

Sebastian outlined the clear need for robust frameworks that ensure both regulatory compliance and ethical integrity, especially as AI systems become increasingly integrated into critical sectors. He detailed the core objectives of the CERTAIN project, emphasizing our work to develop a comprehensive framework for traceability and compliance checking within the evolving European Union regulatory landscape.

He specifically highlighted two key innovations driving our work, a Semantic MLOps Engine and a RegOps Engine:

- The Semantic MLOps Engine supports tracking across the entire AI lifecycle through advanced ontologies.

- The RegOps Engine facilitates compliance assessment by querying the collected information in a dedicated knowledge graph that captures the AI development and deployment process.
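To make the idea of querying a knowledge graph for compliance more concrete, here is a minimal sketch in plain Python. It is not the CERTAIN implementation: the triples, the `ex:` vocabulary, the required properties, and the helper names `match` and `missing_evidence` are all hypothetical and chosen only for illustration. The sketch stores lifecycle metadata as subject-predicate-object triples and reports which models lack the evidence a rule asks for.

```python
from typing import Optional

Triple = tuple[str, str, str]

# Hypothetical lifecycle metadata; the vocabulary is invented for illustration.
GRAPH: set[Triple] = {
    ("model:credit-scorer", "rdf:type", "ex:Model"),
    ("model:credit-scorer", "ex:trainedOn", "dataset:loans-2024"),
    ("model:credit-scorer", "ex:hasRiskAssessment", "doc:risk-001"),
    ("model:chat-helper", "rdf:type", "ex:Model"),
    ("model:chat-helper", "ex:trainedOn", "dataset:support-logs"),
}

# Assumed rule: every model needs a recorded dataset and a risk assessment.
REQUIRED_PROPERTIES = ("ex:trainedOn", "ex:hasRiskAssessment")


def match(
    graph: set[Triple],
    s: Optional[str] = None,
    p: Optional[str] = None,
    o: Optional[str] = None,
) -> list[Triple]:
    """Return all triples matching the pattern; None acts as a wildcard."""
    return sorted(
        t
        for t in graph
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    )


def missing_evidence(graph: set[Triple]) -> dict[str, list[str]]:
    """Map each model to the required properties it has no triple for."""
    report: dict[str, list[str]] = {}
    for model, _, _ in match(graph, p="rdf:type", o="ex:Model"):
        missing = [
            prop for prop in REQUIRED_PROPERTIES if not match(graph, s=model, p=prop)
        ]
        if missing:
            report[model] = missing
    return report


if __name__ == "__main__":
    print(missing_evidence(GRAPH))
```

In this toy graph, only `model:chat-helper` is flagged, because no risk assessment was recorded for it. A production system would more plausibly use an RDF store and a query language such as SPARQL; the set of tuples here simply keeps the example self-contained.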

The presentation led to insightful discussions. Participants explored how CERTAIN’s goals directly align with the seminar’s core themes, engaging in valuable conversations about decentralized system governance, ODRL-inspired policy semantics, and the development of verifiable policy modeling in complex sociotechnical ecosystems.

Advancing Responsible AI Collaboration

Our presence at the Dagstuhl seminar underscores the CERTAIN project’s commitment to engaging with leading experts and contributing to the global discourse on responsible AI development and deployment.

We value the insights gained and look forward to continuing these important collaborations as we advance our research.